Japanese term dictionary compilation from the Web

Project goal

Our goal is to recognize and handle unknown Japanese terms. We explore two research directions:

- making existing lexical resources usable, and

- acquiring terms that are not covered by existing resources.

We already have good sources of lexical information, Wikipedia in particular. However, it is not easy to use them in natural language applications (e.g., information retrieval and text mining) built on top of natural language analysis, so one might resort to string-based longest matching. The reason is that the term, the unit that applications work with, is often inconsistent with the word, the unit of analysis. This problem is particularly severe for Japanese because it does not delimit words with white space. To benefit from recent advances in analysis, we need to incorporate terms into natural language analysis.
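To make the limitation of string-based longest matching concrete, here is a minimal Python sketch. The function name `longest_match` and the toy term sets are assumptions made for illustration and are not part of the project. The second call shows how matching without word boundaries can find "人参" (carrot) inside "外国人参政権" (voting rights for foreign residents), where it straddles two unrelated words.

```python
def longest_match(text, terms):
    """Greedy left-to-right longest matching of dictionary terms in raw text.

    Returns a list of (offset, term) pairs. No word segmentation is used,
    so a term may be matched across what are really word boundaries.
    """
    max_len = max((len(t) for t in terms), default=0)
    found = []
    i = 0
    while i < len(text):
        match = None
        # Try the longest candidate first so that longer terms win.
        for length in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + length]
            if candidate in terms:
                match = candidate
                break
        if match:
            found.append((i, match))
            i += len(match)
        else:
            i += 1
    return found


# The longest entry wins over its substrings.
print(longest_match("東京都に住む", {"東京", "東京都", "京都"}))
# A spurious hit: "人参" spans the boundary between "外国人" and "参政権".
print(longest_match("外国人参政権", {"人参"}))
```

A segmentation-aware analyzer avoids the second kind of match because it only reports terms that align with word boundaries.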

Another problem is that even Wikipedia is far from perfect in terms of coverage. To acquire terms not covered by Wikipedia, we utilize a corpus of a billion Web pages. Such a corpus is much harder to exploit than Wikipedia: while Wikipedia is moderately structured, raw Web pages almost totally lack structure. However, we can potentially extract a much larger amount of knowledge from the Web corpus.

Technical breakthrough

Dictionary compilation using Wikipedia

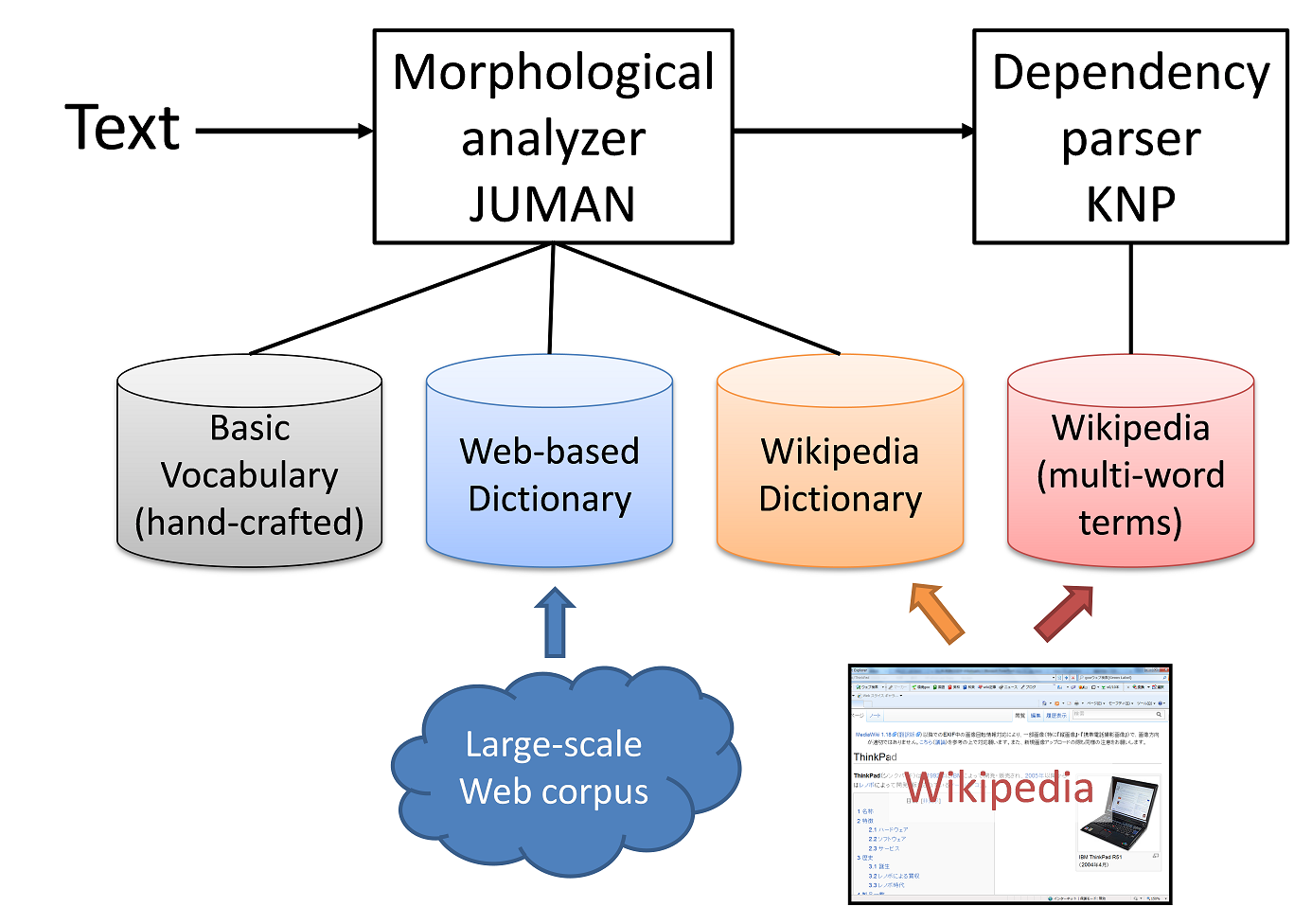

We developed a series of algorithms that take Japanese Wikipedia as input and output dictionaries for Japanese language analysis. We used each entry title as a term candidate. First, we filtered out unnecessary entries such as "2012年" (Year 2012) and "一覧の一覧" (List of lists). Next, we distinguished single words from compound words using heuristic measures. Entry titles identified as single words were incorporated into a dictionary of the morphological analyzer JUMAN, where they are used for segmenting text into words. Compound words were segmented into words and used by the dependency parser KNP: in a preprocessing step, KNP tags each series of words that corresponds to a Wikipedia entry. As a result, we obtained 140 thousand single words and 0.8 million compound words.
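The filtering and single/compound split can be sketched roughly as follows. This is only an illustration under stated assumptions: the two noise patterns are guesses based on the examples in the text, the actual heuristic measures are not described here, and `segment` is a hypothetical caller-supplied function standing in for a word segmenter.

```python
import re

# Hypothetical patterns for titles that are not useful as dictionary terms.
NOISE_PATTERNS = [
    re.compile(r"^\d+年$"),  # year pages such as "2012年"
    re.compile(r"一覧"),      # list pages such as "一覧の一覧"
]


def is_term_candidate(title):
    """Return True if the title matches none of the noise patterns."""
    return not any(p.search(title) for p in NOISE_PATTERNS)


def split_candidates(titles, segment):
    """Partition titles into single words and compound words.

    `segment` maps a title to a list of words. A title that yields one word
    is treated as a single word, anything longer as a compound. This rule is
    an assumption made for the sketch.
    """
    singles, compounds = [], []
    for title in titles:
        if not is_term_candidate(title):
            continue
        words = segment(title)
        if len(words) <= 1:
            singles.append(title)
        else:
            compounds.append((title, words))
    return singles, compounds


# Toy segmenter: knows one compound, treats everything else as one word.
toy_segment = lambda t: {"日本庭園": ["日本", "庭園"]}.get(t, [t])
singles, compounds = split_candidates(
    ["2012年", "一覧の一覧", "兼六園", "日本庭園"], toy_segment
)
print(singles)
print(compounds)
```

In this sketch the single words would go to the morphological analyzer's dictionary, while each compound keeps its word sequence so that a parser can tag the whole span.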

The extracted terms were not used for language analysis alone. To help applications, terms were augmented with semantic information. For each Wikipedia entry, we extracted its hypernym from the lead sentence (e.g., "日本庭園" (Japanese garden) for "兼六園" (Kenrokuen)). Using Wikipedia redirects, we also identified spelling variants, which are ubiquitous in Japanese text. For example, the Katakana form "マツゲ" (eyelashes) is a spelling variant of the Hiragana form "まつげ."
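One way to picture the use of redirects is the sketch below. It assumes the redirects are already available as (source title, target title) pairs. The function names and the "script"/"alias" labels are hypothetical, and the Katakana-to-Hiragana comparison is an added illustration rather than the project's method.

```python
from collections import defaultdict


def to_hiragana(text):
    """Convert Katakana letters to Hiragana; leave other characters unchanged.

    Katakana U+30A1..U+30F6 sits exactly 0x60 above the Hiragana block.
    """
    return "".join(chr(ord(c) - 0x60) if "ァ" <= c <= "ヶ" else c for c in text)


def group_variants(redirects):
    """Group redirect sources under their target title.

    A source that equals the target after kana normalization is labeled
    "script" (a pure script variant); anything else is labeled "alias".
    """
    variants = defaultdict(list)
    for source, target in redirects:
        if source == target:
            continue
        same_reading = to_hiragana(source) == to_hiragana(target)
        variants[target].append((source, "script" if same_reading else "alias"))
    return dict(variants)


# Illustrative pairs modeled on the examples in the text.
pairs = [("マツゲ", "まつげ"), ("Silverlight", "Microsoft Silverlight")]
print(group_variants(pairs))
```

Not every redirect is a spelling variant, so a real pipeline would need further filtering beyond this normalization check.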

It is difficult to evaluate the effect of the extracted dictionaries in terms of the accuracy of natural language analysis because the existing gold-standard text was taken from decades-old newspaper articles. It is also inefficient to fully annotate new text for evaluation because high-frequency words are already covered by the hand-crafted dictionary and the extracted terms belong to the "long tail." For this reason, we performed natural language analysis with and without the extracted dictionaries and manually compared the differences between the two results. We confirmed that the extracted dictionaries generally helped correct segmentation errors.
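The with/without comparison can be supported by a small script that lists where two segmentations of the same sentence disagree, so that only the differing sentences need manual inspection. The sketch below is an assumption about how such a comparison might be set up, and the baseline segmentation in the example is invented for illustration.

```python
def boundaries(tokens):
    """Return the set of character offsets at which a token ends."""
    offsets, pos = set(), 0
    for tok in tokens:
        pos += len(tok)
        offsets.add(pos)
    return offsets


def segmentation_diff(baseline, extended):
    """Compare two segmentations of the same text by their word boundaries.

    Returns the boundaries that exist only in the baseline ("removed") and
    only in the extended analysis ("added"). Both lists are empty when the
    two segmentations agree.
    """
    if "".join(baseline) != "".join(extended):
        raise ValueError("segmentations cover different text")
    b, e = boundaries(baseline), boundaries(extended)
    return {"removed": sorted(b - e), "added": sorted(e - b)}


# Invented example: the baseline splits an unknown term into characters.
without_dict = ["兼", "六", "園", "に", "行く"]
with_dict = ["兼六園", "に", "行く"]
print(segmentation_diff(without_dict, with_dict))
```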

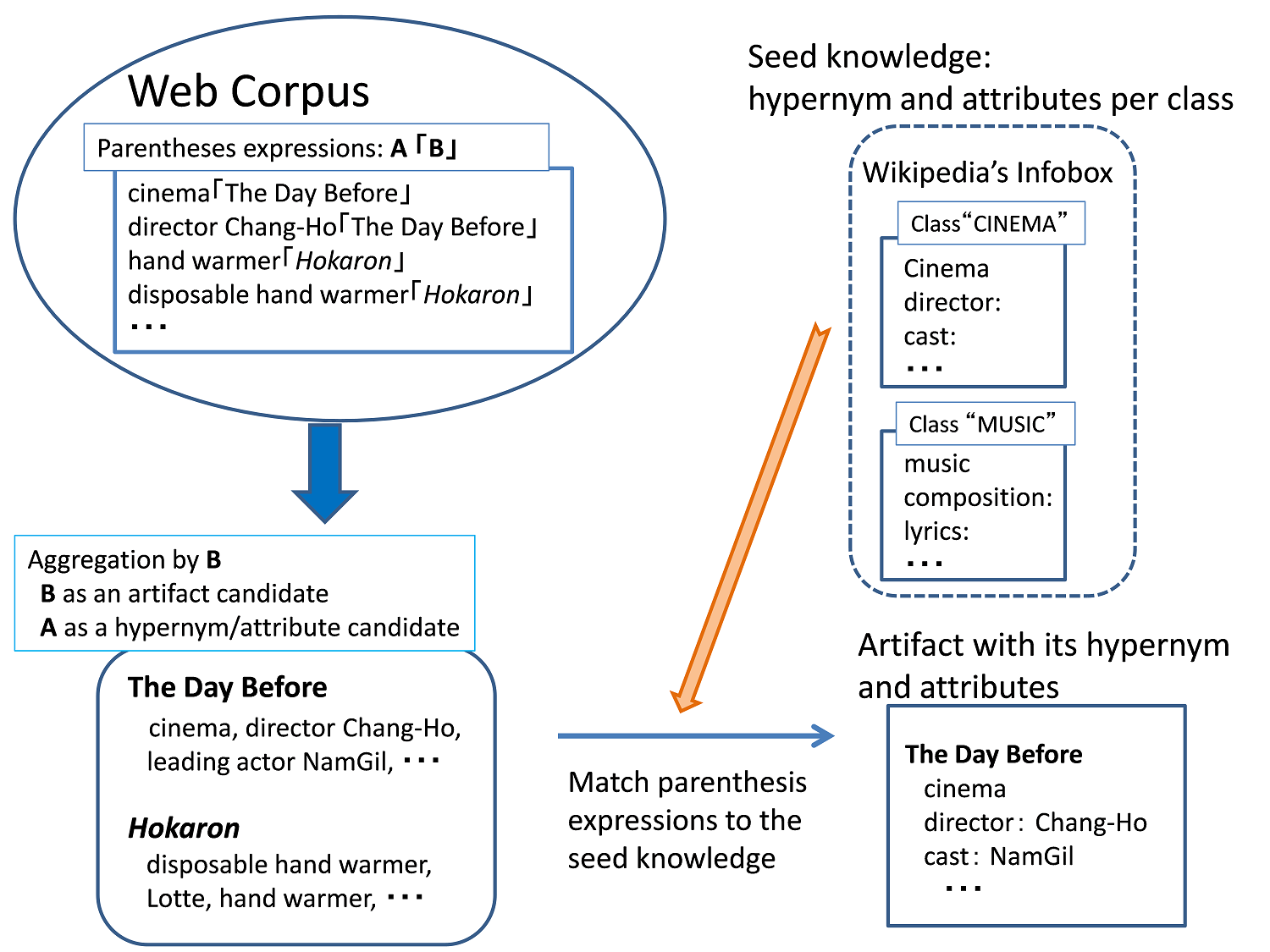

Acquisition from the Web corpus

For the raw Web pages, we focused on parenthetical expressions of the form "A「B」" such as "映画「暴風前夜」" (the film "The Day Before"). We treated B, the phrase inside the brackets, as an artifact candidate and A, the phrase preceding the brackets, as its hypernym or attribute. In reality, parenthetical expressions are very noisy, and we needed to select good candidates. To do this, we used Wikipedia as seed knowledge: from Wikipedia's semi-structured infoboxes, we extracted a hypernym and attributes for each class, or group of terms. We then aggregated parenthetical expressions by B and compared the set of As against the seed knowledge to jointly identify each artifact together with its hypernym and attributes. We also set a simple fallback for undefined classes. As a result, we obtained more than a million terms from a Web corpus that consisted of 7 billion sentences (terms covered by Wikipedia were excluded). By sampling the output, we estimated the accuracy at 79%.
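The aggregation step can be illustrated with the sketch below. Everything specific in it is an assumption: the regular expression (A is approximated as a run of Kanji or Katakana directly before the opening bracket), the shape of the seed knowledge (a class name mapped to a set of cue words), the simple count-based score, and the `min_score` threshold. The joint identification described above is more involved than this.

```python
import re
from collections import Counter, defaultdict

# A: run of Kanji/Katakana right before the bracket; B: bracketed phrase.
PAIR = re.compile(r"([一-龥ァ-ヶー]+)「([^「」]+)」")


def collect(sentences):
    """Aggregate A「B」 expressions by B, counting each A seen with it."""
    by_b = defaultdict(Counter)
    for sentence in sentences:
        for a, b in PAIR.findall(sentence):
            by_b[b][a] += 1
    return by_b


def classify(a_counts, seed, min_score=2):
    """Pick the seed class whose cue words best cover the observed As.

    Returns None when no class reaches `min_score`; a real system would
    apply some fallback for such undefined classes.
    """
    best_class, best_score = None, 0
    for cls, cues in seed.items():
        score = sum(n for a, n in a_counts.items() if a in cues)
        if score > best_score:
            best_class, best_score = cls, score
    return best_class if best_score >= min_score else None


# Hypothetical seed knowledge: class name -> hypernym and attribute words.
seed = {"映画": {"映画", "監督", "主演"}, "楽曲": {"楽曲", "歌手", "作曲"}}
sentences = [
    "話題の映画「暴風前夜」が公開された",
    "この映画「暴風前夜」は面白い",
    "友人の一言「暴風前夜」",
]
by_b = collect(sentences)
print(dict(by_b["暴風前夜"]))
print(classify(by_b["暴風前夜"], seed))
```

Aggregating by B before scoring is what lets a few noisy contexts, such as the third sentence, be outweighed by consistent ones.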

Innovative applications

The dictionaries extracted from Wikipedia were incorporated into Japanese language analysis. To access the research results, all one has to do is call the morphological analyzer JUMAN and the dependency parser KNP as usual. The extracted dictionaries help select the correct segmentation. Semantic information extracted from Wikipedia is encoded in the outputs of these analyzers. For example, "藍澤光" (Hikaru Aizawa) is tagged "Wikipedia hypernym:mascot (character)" while "Silverlight" is recognized as an alias of "Microsoft Silverlight."

The simplest application of the research results would be keyword matching. Even today it is not uncommon to use the string-based longest matching method instead of language analysis: since the existing dictionaries for natural language analysis lack artifacts, analyzers often produce catastrophic segmentation errors. With the new dictionaries, even newly developed products can be recognized correctly. The application can simply read the output of the analyzers to find keywords.

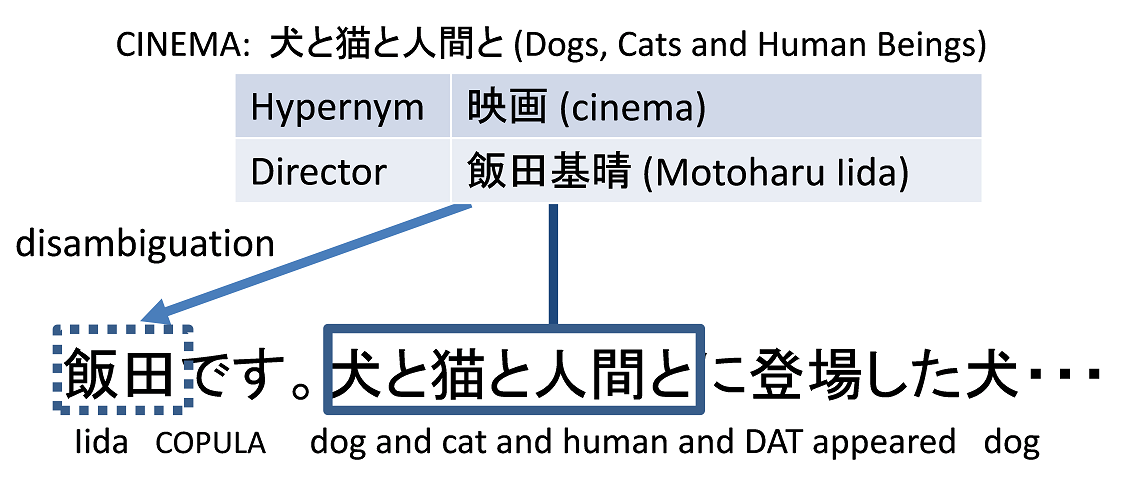

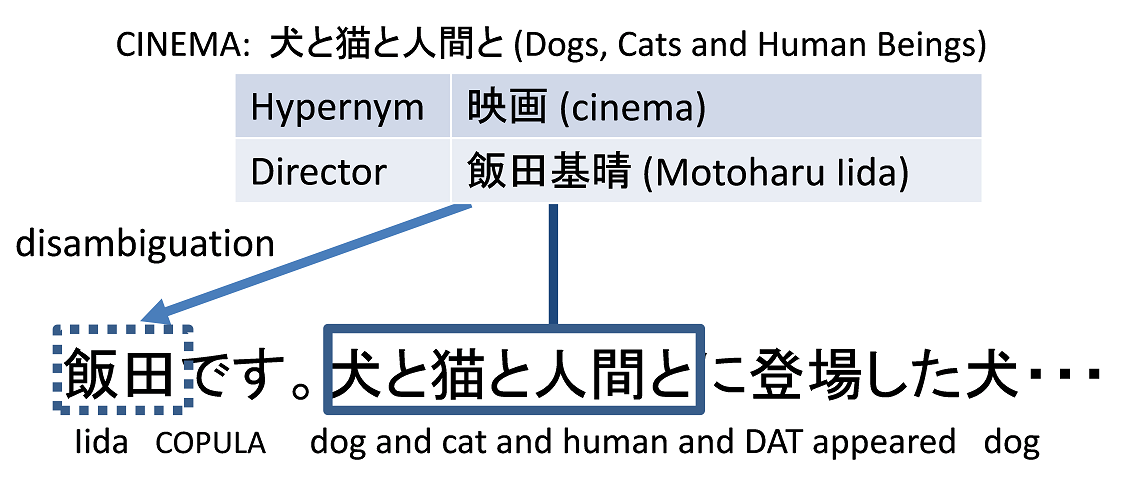

Another application is higher-level natural language analysis, with the long-term goal of natural language understanding. In the figure, "犬と猫と人間と" (Dogs, cats and human beings) is identified as a term because it was acquired from parenthetical expressions. The series of words is therefore recognized as a single entity, and we can see that "犬" (dog) in this term does not have an anaphoric relation with the word "犬" that immediately follows the term. The term is also associated with the attribute "Director: Motoharu Iida." With this, we can disambiguate the word "飯田" (Iida) in the text, which would otherwise be ambiguous between a place and a person.

Acknowledgement

This project was supported by the MSR Core Program.